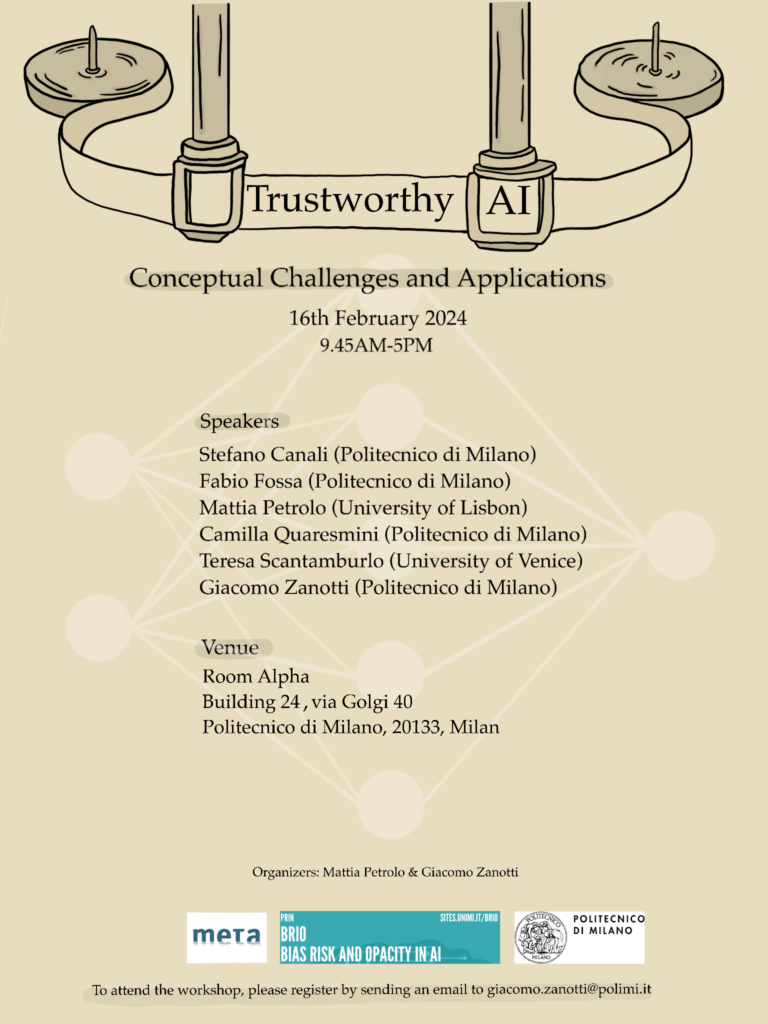

16 February 2024, Politecnico di Milano, Milano (Italy)

This workshop is intended to investigate the multifaceted landscape of trustworthy AI, exploring its conceptual challenges and real-world applications. The focus is on fostering discussions regarding epistemological and ethical considerations, and the societal implications of AI systems.

Key questions to be addressed include: Is trustworthiness the most apt notion for framing our interaction with AI systems? What relations exist between trustworthy, reliable, and responsible AI? What practical and conceptual advantages arise from trustworthy AI systems? Furthermore, we will examine how trustworthy AI contributes to mitigating bias and managing risks in sensitive fields.

The workshop is supported by Politecnico di Milano, META, and the PRIN project BRIO – BIAS, RISK, OPACITY in AI.

Speakers

Stefano Canali (Politecnico di Milano)

Fabio Fossa (Politecnico di Milano)

Mattia Petrolo (University of Lisbon)

Camilla Quaresmini (Politecnico di Milano)

Teresa Scantamburlo (University of Venice)

Giacomo Zanotti (Politecnico di Milano)

Conference venue

Room Alpha, Building 24

Politecnico di Milano,

Via Golgi 40, 20133 Milano.

Program

9:45 – 10:00: Opening

Part I: Conceptual challenges

10:00 – 10:45: F. Fossa Trust, reliance, and the game of semantic extension

10:45 – 11:30: M. Petrolo and G. Zanotti To trust, or not to trust, that is the question

11:30 – 11:45: Break

11:45 – 12:30: T. Scantamburlo Moral exercises for human oversight of AI systems

12:30 – 14:15: Lunch break

Part II: Applications

14:15 – 15:00: S. Canali Medical AI and health-related internet of things: Emerging trade-offs from personalization

15:00 – 15:45: C. Quaresmini In-processing fairness for Trustworthy AI

15:45 – 16:00: Break

16:00 – 16:30: Roundtable on Trustworthy AI

Attendance is free, but space is limited, so we ask participants to register by sending an email to giacomo.zanotti@polimi.it.

For enquiries about the workshop, please contact the organizers at mpetrolo@fc.ul.pt and giacomo.zanotti@polimi.it.